The Case for Verified Media

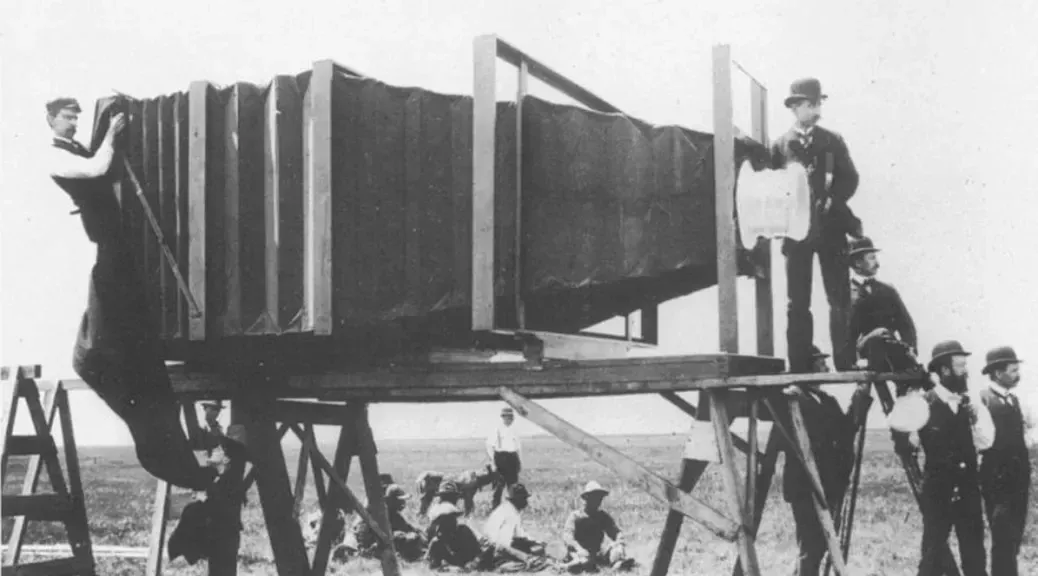

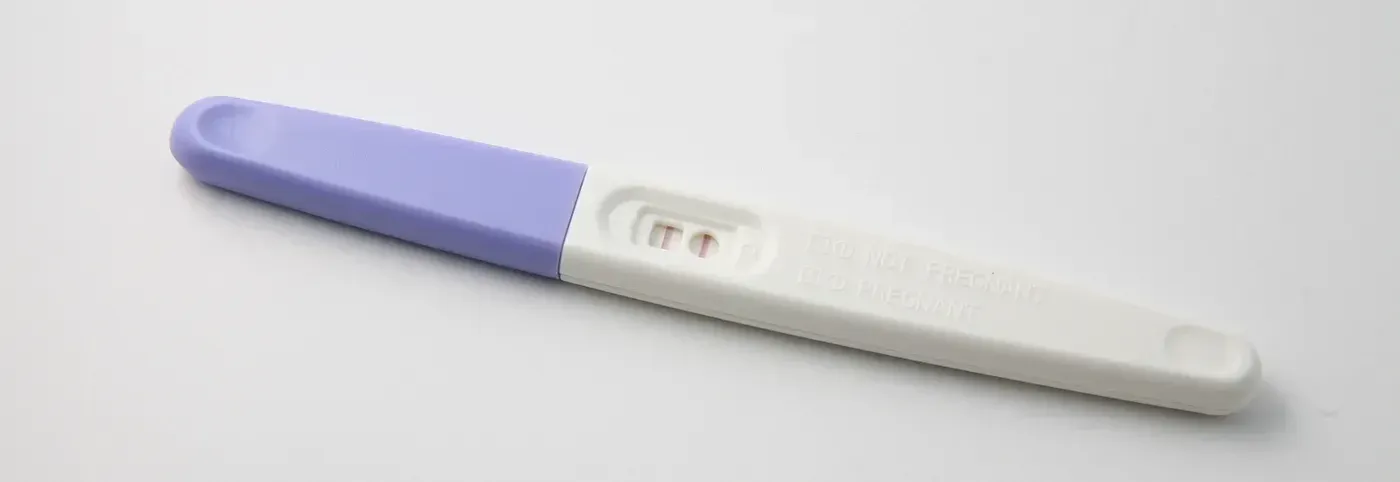

Real photograph, fake story.

Since the invention of the camera, people have exploited the fact that we respond viscerally to media. Put simply: pics, or it didn’t happen. (By which we mean: if there are pics, then it definitely DID happen.) Not only is a picture worth a thousand words, but our brains are hardwired to trust our own senses. (More importantly, they resist being convinced otherwise, even when we KNOW we’ve been fooled.)

The power of the computer, especially since the arrival of ubiquitous digital cameras and the rise of Adobe Photoshop, has let us subvert that implicit trust. In movies, practical effects gave way to CGI; more recently, we have seen the rise of the deepfake.

Synthetic media is now a staple of crime and warfare around the globe. In insurance fraud, identity theft, disinformation campaigns, revenge pornography, defamation, and more, the underlying weapon is our ability to convince one another that a fake isn’t. More insidious still, every new deepfake causes a second harm: it erodes our confidence in the evidence of our senses. This is called the “Liar’s Dividend,” and it hands despots and dictators a get-out-of-jail-free card. “That was never me in that video,” they cry. “It must be a fake.”

Current efforts in the fight against synthetic media focus on detection: can we analyze a photo or video and tell whether it is genuine? This approach has served us well to date, but as deepfakes become the dominant way of producing synthetic media, detection is no longer enough.

First, detection will never be 100% accurate. DARPA has funded tens of millions of dollars of work in this area, yet each generation of detection tech becomes less reliable over time. Detection methods can’t keep pace with advances in generation techniques, which leads to two different problems: false negatives and false positives.

Which is Worse: False Positive, or False Negative?

False positives are an administrative problem for media platforms such as YouTube, Instagram, or WhatsApp, and a resource problem for law enforcement. In the worst cases, media wrongly labelled as fake can trigger a backlash of censorship claims and “Deep State” conspiracy theories. Even in the best case, a system with a high rate of false positives will quickly be ignored. (Imagine an insurance company where 5–10% of genuine car accident photos are wrongly flagged as “synthetic.”)

But false negatives are even worse. Imagine what would have happened if the deepfake video of Zelensky’s surrender to Putin had been wrongly labelled “Not Fake.”

Given how deepfakes are actually generated, there’s a third problem with detection: every system built to detect deepfakes can immediately be weaponized to MAKE deepfakes that evade it. (The underlying technology is the generative adversarial network, or GAN, one of the most powerful forms of AI developed in recent years. A GAN trains a generator against an adversary that tries to spot its fakes, so any published detector can simply be plugged in as that adversary.)

We can avoid this third problem by keeping the underlying mechanisms of detection secret. DARPA made the mistake of publishing its earlier detection work as open source, but to their credit, most commercial detection companies keep their methods secret. This introduces yet another problem: naysayers won’t trust a detection mechanism that isn’t exposed to public scrutiny, and we’re back to the Liar’s Dividend again.

The solution to this problem is simple to define but hard to achieve: we need a global standard for “authentic” media. That authenticity must be established at the moment of capture, the click of the proverbial shutter. And it must be tamperproof: based on mathematics, not on trusted partners.